It’s now been a whole month since I left Canonical and started working as an independent!

This has been quite the month, both professionally and personally!

In no particular order, this included, setting up a new business, dealing with a somewhat last minute datacenter move (thankfully just one floor down), doing some initial sponsored work, helping out with a LXD fork, selling a house and caring for a sick cat (now all back to normal).

Given everything that’s been happening, I thought I’d use the opportunity to write down some details on the most relevant things I’ve been doing and what to expect moving forward.

Zabbly

Zabbly is the name of the business I’ve registered here in Canada.

I didn’t really like the idea of doing all business moving forward just under my own name as I may want to sub-contract some aspects of it or even have employees down the line.

Having the business part of my life have its own name will make that a fair bit cleaner.

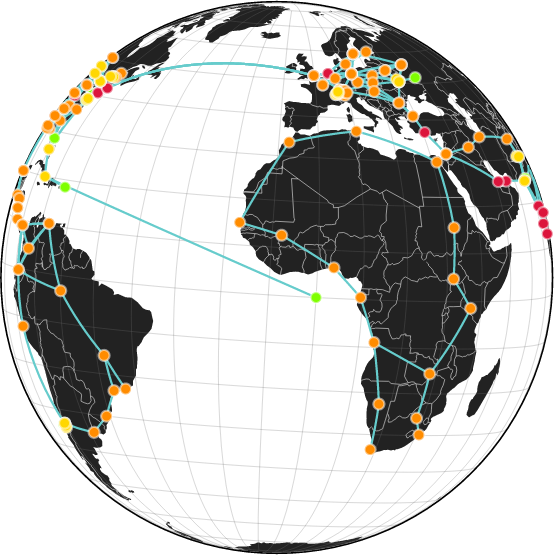

For now, the main things that have been moved over to Zabbly are my organization and IP allocations with ARIN, membership on the Montreal Internet Exchange (QIX) and a number of associated contracts related to AS399760 (my BGP ASN). As part of that, Zabbly is also now listed as the sponsor for all the Linux Containers infrastructure.

Allowing to more clearly separate personal and work-related expenses is going to be another benefit of this move even if legally and from a tax point of view, it’s still all me.

ZFS delegation

An initial bit of sponsored work I got to do this month has been adding support for ZFS delegation to LXD. This makes use of a ZFS 2.2 feature which allows for a dataset to be delegated to a particular user namespace. The ZFS tools can then be used from within that container to create nested datasets or manage snapshots.

This is very exciting as it was the one feature that btrfs had which ZFS offered no equivalent for. It should allow for things like running Docker with the ZFS backend inside of LXD containers, having VPS users be able to create their own datasets, handled their own snapshots and be able to send and receive datasets.

The pull request can be found here: https://github.com/canonical/lxd/pull/12056

Incus

Some of you may have seen the announcement of a new LXD fork called Incus and its subsequent inclusion into the Linux Containers project.

This was quite an exciting development and the LXC team spent quite a bit of time over the past couple weeks chatting with Aleksa and seeing where things were headed.

On my end, I initially helped out trying to make the thing actually pass the testsuite, quite a bit harder than it may sound when dealing with a pretty big codebase and everything having been renamed! I also contributed some ideas of what such a fork may want to change compared to stock LXD.

It’s not often that you get a second chance at designing something like LXD/Incus.

While having a working upgrade path and good backward compatibility is obviously still very important, the fact that anyone migrating will need to deal with some amount of manual work also makes it possible to do away with past mistakes and remove some bits that are seldom used.

I expect I’ll be spending a bunch of my time over the next couple of months helping get Incus into a releasable state. Continuing with the current cleanups, getting the documentation back into shape, putting CI and publishing infrastructure back online (basically re-using what I was once providing to LXD).

The biggest task yet to come is to write tooling and processes to monitor changes happening in Canonical’s LXD and then cherry-pick those into Incus. Again, the hard fork, name and path changes and variety of other changes is going to make that a bit of a challenge but once done, it should make it quite easy to do weekly syncs and reviews of changes.

What’s next

As mentioned, I expect to spend a fair bit of my time over the next few weeks/months helping out with Incus, getting it into shape for an initial release.

For those who enjoyed the LXD YouTube channel, I’m also setting up a new channel that will primarily cover Incus but also some other of my projects: https://www.youtube.com/@TheZabbly.

I’m all set up for contract work and sponsorship now, so if there’s anything you think I can do for you, feel free to reach out at info@zabbly.com.

I’ve also been added to the Github Sponsors program, so if you’d just like to help out with my work on those various projects, that’s available too: https://github.com/sponsors/stgraber

Github

Github Twitter

Twitter LinkedIn

LinkedIn Mastodon

Mastodon